On a project I'm working on, we use PgCat as the PostgreSQL frontend. We chose it mainly on gut feeling: PgBouncer seems a bit dated, although it would arguably have been the safe choice.

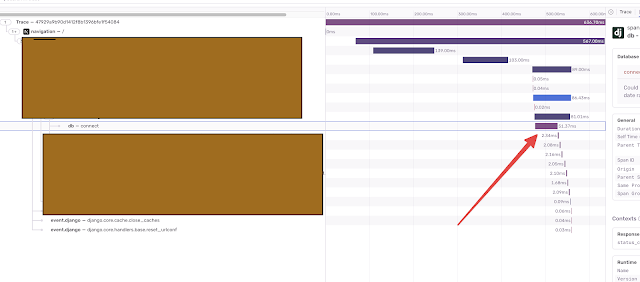

I was looking into the connection times using our tracing tool (Sentry) and noticed that establishing connections takes about 50ms.

That is a bit slow, right?

It was easy enough to confirm that it is indeed very slow: establishing a direct connection to the mostly idle Postgres takes less than 5 ms.
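A comparison like this can be reproduced outside the tracing tool with a small timing helper. The following is only a sketch: `median_connect_ms` is a hypothetical name, and the `connect` callable is whatever your driver uses to open and close a connection, so nothing here is specific to PgCat or Sentry.

```python
import statistics
import time
from typing import Callable


def median_connect_ms(connect: Callable[[], object], attempts: int = 20) -> float:
    """Call `connect` repeatedly and return the median duration in milliseconds.

    `connect` is assumed to open (and ideally close) one connection per call.
    """
    samples = []
    for _ in range(attempts):
        start = time.perf_counter()
        connect()
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples)


# Hypothetical usage with psycopg; both DSNs are placeholders:
#   import psycopg
#   via_pooler = median_connect_ms(lambda: psycopg.connect(POOLER_DSN).close())
#   direct = median_connect_ms(lambda: psycopg.connect(DIRECT_DSN).close())
```

The median is used instead of the mean so that a single slow outlier does not dominate the result.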

I quickly found a ticket about connection slowness, hinting that the problem could be related to TCP_NODELAY.

Essentially, setting TCP_NODELAY on a socket disables Nagle's algorithm, which holds back small writes so that they can be batched into fewer packets. I guess that the messages exchanged while a client establishes a connection to PgCat are so small that the extra buffering is actively harmful.
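For illustration, this is what setting the option looks like on a plain TCP socket in Python. It is not PgCat's code, and `open_nodelay_socket` is a hypothetical helper name; it only shows the socket option the ticket refers to.

```python
import socket


def open_nodelay_socket() -> socket.socket:
    """Create a TCP socket with Nagle's algorithm disabled."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # With TCP_NODELAY set, small writes are sent right away instead of
    # being held back to be coalesced with later data.
    sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    return sock


sock = open_nodelay_socket()
# A nonzero value means the option is enabled.
nodelay_enabled = sock.getsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY) != 0
sock.close()
```

The trade-off is more, smaller packets on the wire, which is usually fine for a request/response protocol where each side waits for the other's reply.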

And sure enough, after upgrading PgCat, we see sub-5ms connection times.

So why use PgCat at all? For us, it is for scaling purposes, but not for load distribution. Our applications do not bombard the DB with a massive number of queries; instead, they open long-lasting connections that might run only a few short queries. Pooling those connections not only saves resources on the PG side but also lets us sidestep the limit on maximum connections, which we want to keep low.
